The end of history: were we all thinking like Fukuyama in the 1990s?

"The End of History and the Last Man" made many people feel we'd entered a uniquely transformative period, severed from the past. Is that how it was?

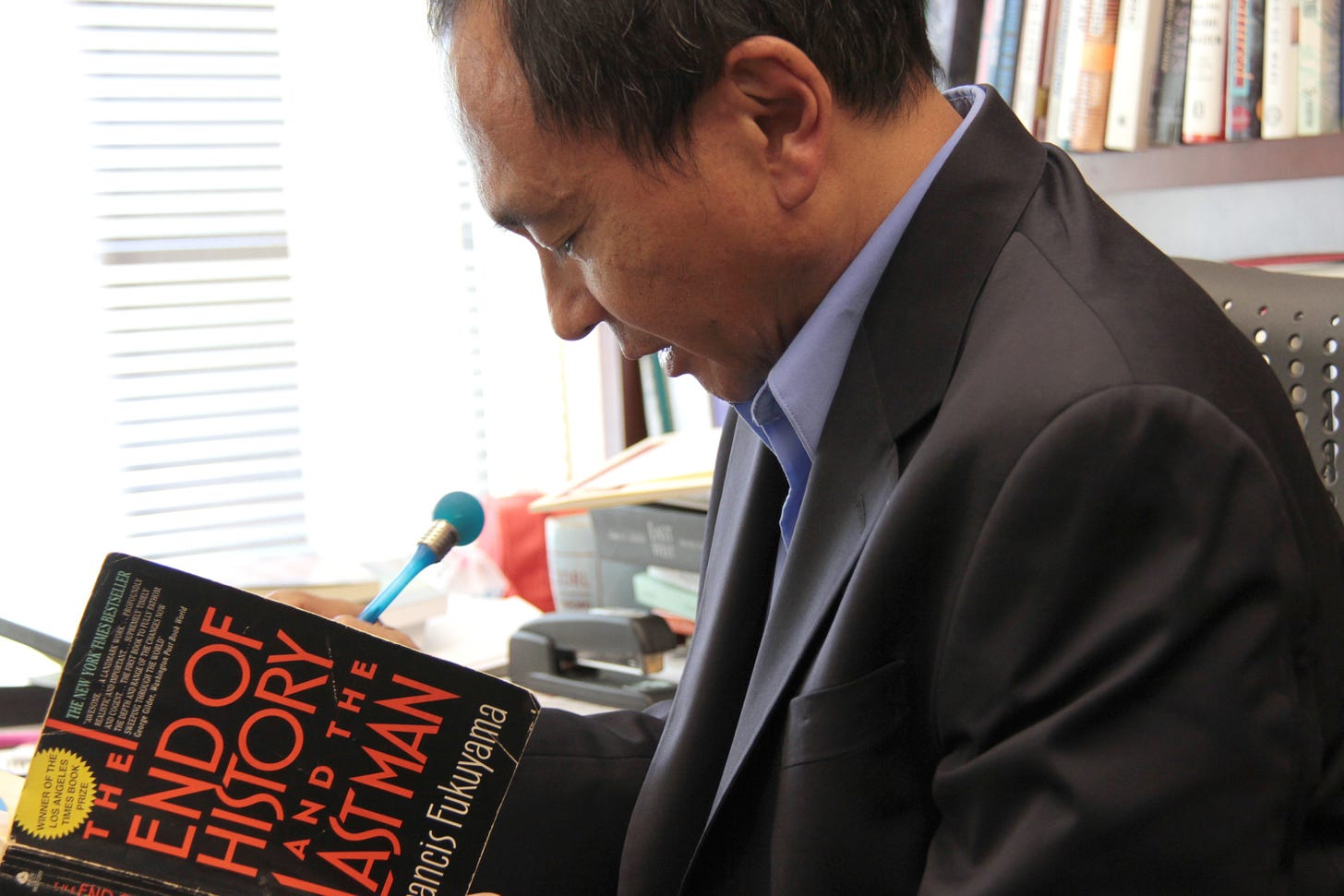

I don’t know whether Francis Fukuyama regrets his 1992 volume The End of History and the Last Man, which is now cited as virtually the acme of overweeningly confident predictions about the future and the stability of the present. More likely, as he is no fool, the Stanford senior fellow has long since realised that there is as much mileage in having been catastrophically and publicly wrong about something as there is in getting it right. He is still a hugely respected geopolitics scholar, and it’s worth remembering that when The End of History was published, most of us thought he was on the money.

Fukuyama was brought to my mind as I read an excellent essay, “The End of Mystery”, by the delightful historian of religion and belief Dr Francis Young. He has many strings to his bow: historian, folklorist, indexer, lay canon at St Edmundsbury Cathedral in Suffolk, Baltic history obsessive. His essay argues against what he calls “temporal particularism”, the belief that the time we are living in is unique and unprecedented and special, of which The End of History is the loudest affirmation. Instead, because of his sense of history and his faith, he argues that we should see ourselves much more strongly connected to the past and as part of a continuum of human existence.

I agree with almost everything he says, but it made me think about my own perception of Fukuyama’s glory days. I think I am a little older than Young but not much, our interests overlap at several points and we write for many of the same publications, and he is always an interesting writer. More significantly here, we must both have been teenagers for much of the 1990s, which is why his essay so readily made me think of my own experiences and my reaction to the Fukuyama school, such as it was.

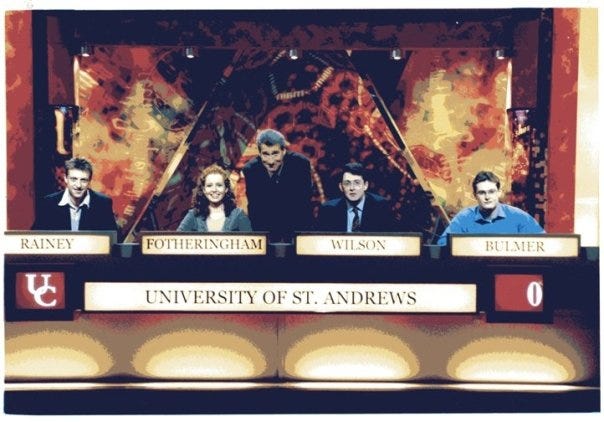

Some basic chronology: I sat my GCSEs in 1992, my A-levels in 1994, went up to Oxford for an academically disastrous year at the end of which I withdrew, spent 1995-96 doing a lot of not very much, then went to St Andrews in September 1996 and graduated in history in June 2000. So the context of the 1990s for me was education and learning, even if I singularly failed to absorb some basic lessons. It is also worth saying that I had always been fascinated by politics but this was the decade in which I came to understand the issue properly. I remember unexpected delight when John Major won the general election of April 1992, the grim years of sleaze and division as the Conservative Party disintegrated and (in retrospect) Sir Tony Blair and Lord Mandelson, with others, painstakingly constructed New Labour in order to win the landslide victory of 1 May 1997.

That was the first election in which I voted, and I voted for the Conservative and Unionist candidate in North East Fife, the Honourable Adam Bruce, who lost by furlongs—10,356 votes compared to his predecessor Mary Scanlon’s deficit of 3,308 five years before—to Menzies Campbell. (Bruce is now a herald, fittingly. Many years later I would work for Sir Menzies, as he was by then, when he was leader of the UK delegation to the NATO Parliamentary Assembly and I was its secretary. We got on very well and his late wife Elspeth was an amazing woman.) The end of that decade, then, in domestic political terms, was a barren one for Conservatives. The party lost all its seats in Scotland and Wales and experienced such a crisis of identity north of the border that options like separating from the UK party and changing its name were considered.

My horizons were not confined to domestic politics, though. The decade had begun with Saddam Hussein’s invasion of Kuwait in August 1990 and its subsequent liberation in the first Gulf War—Operation Desert Storm for the Americans, Operation Granby for UK forces—and by chance I had been bedridden with chicken pox when the air war had begun on 16 January 1991. It was the first conflict in which journalists were able to report live from the front line, with CNN, still very much television minnows behind CBS, NBC and ABC, coming to the fore. There was also much less censorship of the news than the United States had been able to exercise in Vietnam 20 years before. So I, a 13-year-old boy obsessed with soldiers, lay spellbound and watched the first video game war unfold.

This acted as a kind of plunge pool of foreign reporting. At that stage I didn’t understand everything I was taking in, but I soon would. That summer, Boris Yeltsin won the first ever presidential election in the Russian Soviet Federative Socialist Republic, the largest constituent member of the Union of Soviet Socialist Republics; in August, communist hardliners launched an unsuccessful coup against Mikhail Gorbachev, hanging on as president of the USSR; and at the end of the year, the USSR was dissolved. It was perhaps the inevitable denouement of what had arguably begun with the Pan-European Picnic in August 1989, but it was still epochal.

Meanwhile Yugoslavia began to fall apart in 1991 and would be wracked by vicious ethnic conflict for the rest of the decade. The international community would disgrace itself by failing to do anything to prevent the Rwandan genocide in April to July 1994, which may have claimed one million lives. In 1995 the United Nations’ chaotic mission in Somalia was withdrawn with little to show for its two-year presence. That November, prime minister of Israel Yitzhak Rabin was murdered by a Jewish extremist, plunging the Middle East peace process into doubt. On 1 July 1997 the United Kingdom handed Hong Kong to the People’s Republic of China. There was, I would argue, a lot going on.

All of this means that, for all the renown which Fukuyama attracted for his book (which is very good and bears re-reading), and the idea of a “brave new world” in which liberal democracy would inevitably triumph and authoritarianism was staggering to its death, it didn’t feel like that to me. It is true that I felt, like many people, a generalised sense of optimism at the West’s “victory” in the Cold War. The period before 1991 had been so starkly polarised, presented so straightforwardly as a struggle between good and evil, that it was hard not to feel virtue had overcome vice.

I was a stereotypically cynical teenager, imagining that a sardonic sneer at everything represented the height of sophistication and maturity, and I made a point of being clear-sighted about “the West”. I thought Robin Cook’s “ethical foreign policy”, shouted from the rooftops after Labour came to power in 1997, was all pose and cant, and argued that the world was a place in which we sometimes had to hold our noses and do business with bad people rather than worse people. I was unexcited by the moral absolutes of the arms to Iraq affair, and the inquiry which followed under Lord Justice of Appeal Sir Richard Scott, and saw it largely in terms of the parliamentary battle between Robin Cook and Ian Lang after its publication. Cook, with hardly any time to prepare, was superb, but Lang, a dryly witty figure who had been a member of the Cambridge Footlights at university, put up a strong and resilient defence which probably defused the issue for a while.

Nevertheless, I did think that the defeat of Communism, the liberation of Eastern Europe and the decline and fall of the USSR were plainly good outcomes. Freedom and democracy had won, and I still defy anyone who would argue otherwise to look at the sheer, elemental joy of those Berliners who pulled down the wall in parts by hand; at the ousting of the military government in Poland and its replacement with a non-communist coalition dominated by Lech Wałęsa’s Solidarność; at the Velvet Revolution in Czechoslovakia in November 1989. These were people who had lived the reality of Communism and the heavy hand of the Soviet Union, and of the Brezhnev Doctrine which justified it. It has always struck me as the height of “not a good look”, as the phrase has it, for Western intellectuals who enjoyed the freedoms we take for granted to tell those who had lived behind the Iron Curtain that, actually, it hadn’t been nearly so bad as they thought.

On that analogy, however, it is also not my purpose to tell other people, especially those older and more worldly, what they actually felt during the Fukuyama years, or to say they were fools or knaves. I do, though, return to the point, having dug deep into the trenches and test pits of my memory, that I was not overwhelmed by a geopolitical sense of discontinuity.

I was certainly aware of change. The first Gulf War had demonstrated to the lay public how extraordinarily sophisticated military technology had become. Journalists in Baghdad could film cruise missiles passing their hotels on the way to specific targets, and the grainy black-and-white camera footage from precision-guided munitions showed that individual buildings and even armoured vehicles could be targeted, making air strikes not only more effective but also reducing the potential for civilian casualties. This impressive display allowed us to ignore the fact that the vast majority of munitions dropped during the conflict, more than 92 per cent, were “dumb” bombs, and that the Soviet-supplied Scud-B tactical ballistic missiles to which Iraq resorted in desperation were outdated and not especially stable or accurate.

Operation Desert Storm also saw the last hurrah for the largest of capital ships: USS Missouri fired her main 16-inch guns in combat for the first time since the Korean War when she bombarded an Iraqi command and control centre near the border with Saudi Arabia. By the end of combat operations, the 47-year-old “Mighty Mo” had fired 783 rounds of main-gun ammunition, each projectile weighing 2,000 pounds and able to be hurled a dozen miles. It was magnificent, but it wasn’t (modern) war.

The increasing accuracy and potency of aerial munitions coincided, with great significance, with the emerging doctrine of liberal interventionism. Its origins were far in the past. In March 1848, Viscount Palmerston, foreign secretary in Lord John Russell’s Whig administration, had told the House of Commons:

I hold that the real policy of England—apart from questions which involve her own particular interests, political or commercial—is to be the champion of justice and right; pursuing that course with moderation and prudence, not becoming the Quixote of the world, but giving the weight of her moral sanction and support wherever she thinks that justice is, and wherever she thinks that wrong has been done.

Palmerston, of course, is remembered as the champion of “gunboat diplomacy”, but the idea of a nation enforcing its values and preventing injustices around the world gained new currency after the apparent liberal democratic victory of the Cold War. After the defeat of Iraq, United Nations Security Council Resolution 688 condemned Saddam Hussein’s repression of the Kurdish population in northern Iraq and a no-fly zone north of the 36th parallel was established to exclude Iraqi aircraft, policed by coalition air power and, where necessary, air and missile strikes on Iraqi military installations and units.

It seemed, suddenly, as if foreign policy could be conducted in a hard-edged kinetic way purely from the skies, without the risks or commitment of a ground campaign. It was a strange, unexpected vindication of Stanley Baldwin’s warning to the House of Commons in 1932 that “the bomber will always get through”, and it seemed almost infallible. In August and September 1995, Operation Deliberate Force saw sustained NATO air strikes help protect the UN safe havens in Bosnia and Herzegovina, and Operation Allied Force (March to June 1999) forced the withdrawal of the Yugoslav Army from Kosovo, allowing the creation of UNMIK, the United Nations peacekeeping force. In a speech to the Economic Club of Chicago in April 1999, Tony Blair articulated this policy, which became known as the Blair Doctrine.

The principle of non-interference must be qualified in important respects. Acts of genocide can never be a purely internal matter. When oppression produces massive flows of refugees which unsettle neighbouring countries then they can properly be described as “threats to international peace and security”.

During the 1990s this really seemed achievable, practical and morally sound. Many now take a different view, but in my recollection it was the direction of travel for a post-Cold War West: our defeat of the Soviet Union and the subsequent liberation of Eastern Europe had been a strategic success but it had also been a victory for values. The logical next step was to export those values around the world, not necessarily imposing them by force, but certainly using military might to stop actions which were an affront to them. No-one proposed bombing Saudi Arabia to enforce democratic elections or women’s rights, but if air power could stop literal genocide as was being perpetrated in the Balkans and attempted in northern Iraq, then that option was on the table.

The other prevalent belief, I suppose, was that the end of the Cold War and the removal of the threat of the Warsaw Pact meant that by definition we could reduce the size, and therefore the cost, of our armed forces. In 1990, the Royal Navy, the Army and the Royal Air Force combined amounted to 308,000 personnel; by 2000 that was 212,500. Equally, at the beginning of the decade, the government spent 4.1 per cent of gross domestic product on defence, which had fallen to 2.5 per cent by 2000. The armed forces were scaled down and restructured under the Options for Change review in 1990 and more cuts came in 1994 with Front Line First, and the Strategic Defence Review of 1998 did not reverse the direction of travel. But the UK was not alone or singularly foolish in this: there was a general belief in what President George H.W. Bush dubbed the “peace dividend”, the expectation that defence spending could, would and should fall after our “victory”. Like liberal interventionism, it has not aged entirely well, but there were not many high-profile dissenters at the time.

There was a sense of optimism in the 1990s, and it is not hard to explain, even justify. The West won the Cold War, allowing six Eastern European nations to institute at least some form of democracy (seven after the Czech Republic and Slovakia parted company in 1992). Germany was reunified, or rather the former German Democratic Republic was absorbed into the Federal Republic of Germany. The break-up of the Soviet Union saw Russia become somewhat liberalised, though democratic institutions never really took root, and saw Estonia, Latvia, Lithuania, Ukraine, Moldova, Georgia, arguably Armenia and even for a time Azerbaijan become independent states of some democratic nature. Croatia and Slovenia emerged relatively quickly from the ruins of the former Yugoslavia as liberal democracies. These transitions were rarely complete or perfect, and in some cases were reversed, but they amounted to hundreds of millions of people living in greater freedom than they had done.

There were specific reasons to feel upbeat in Britain. After the global recession of the early 1990s, the United Kingdom’s recovery was strong. Unemployment peaked at 10.4 per cent in 1993 but then fell sharply to 5.6 per cent at the end of the decade, and GDP increased by about 60 per cent. It’s hard to imagine now, but when Labour came to power in 1997, the government was running a fiscal surplus.

It was also more intangibly a time when being British felt good. Investment in British cinema soared, multiplying many times over, leaving the decade with distinctive, confident, quirky films like The Remains of the Day (1993), Four Weddings and a Funeral (1994), The Madness of King George (1994), Shallow Grave (1994), Sense and Sensibility (1995), Trainspotting (1996), Bean (1997), Elizabeth (1998), Shakespeare in Love (1998) and Notting Hill (1999). Daniel Day-Lewis, Jeremy Irons, Nick Park, John Barry, Anthony Hopkins, Emma Thompson, Anthony Minghella, Andrew Lloyd-Webber, Tim Rice, Anne Dudley and Judi Dench all won Academy Awards.

It was also the era of Britpop, when Pulp, Oasis, Blur and Suede not only combined commercial and critical success but did so in a way which was dependent on their Britishness. They brought about a rediscovery of some of the best of the country’s back catalogue, from the Beatles and the Kinks to Pink Floyd and Wire. All this was taking place while the so-called Young British Artists (Damien Hirst, Sarah Lucas, Gillian Wearing, Sam Taylor-Wood) were transforming the art world, with Charles Saatchi’s patronage providing momentum.

The fullest extent of the Fukuyama thesis, however, as Francis Young ably articulates, is that the 1990s were unique and in some way definitive, literally “the end of history”. I don’t think I felt that, but I’m also acutely aware that human societies go through these paroxysms from time to time. I could talk about the millenarian movements of 1000 and 1033, or the Fifth Monarchists of 17th century England, but most powerfully it makes me think of Bruce Robinson’s towering tragic masterpiece, Withnail and I (1987).

Although it was filmed in the late summer of 1986, it was set in September 1969, at the dying end of the Sixties, shortly after the iconic Woodstock festival but before the Altamont Free Concert in December, the “death of the hippie dream”. Towards the end of the film, Danny the drug dealer (“Headhunter to his friends. Headhunter to everybody. He doesn’t have any friends”) has a burst of clarity and addresses Withnail and Marwood sombrely.

They’re selling hippie wigs in Woolworths, man. The greatest decade in the history of mankind is over. And as Presuming Ed here has so consistently pointed out, we have failed to paint it black.

It’s funny, because Danny’s deadpan, ponderous, drug-addled sincerity is funny, and because of the pun on “Paint It Black”, but there’s a profundity which is heart-wrenching. Bear in mind that Bruce Robinson wrote the (unpublished) novel on which the film was based in the winter of 1969/70, so these are not feelings retrospectively imposed; indeed, there is a great deal of autobiography in the film’s portrayal of struggling young actor Marwood (“I”). More than that, though, it sums up that same sense of the end times, albeit at the other end of the emotional spectrum, that Fukuyama sketched out. Danny has no doubt that he and the others are witnessing a moment which is historic, pivotal and unprecedented, but, for him, the lesson is not one of victory but of failure.

I had never really expected to be uniting Francis Fukuyama and Withnail and I in a single thesis, but they are both relevant, and this is the wider lesson, which has two parts: first, what seems like a powerful zeitgeist will not be felt equally by everyone everywhere, though it is the nature of a zeitgeist that it will seem that way; second, that a zeitgeist will often seem like it is an irreversible changing of the world order, but that may not always be so.

That second point can be demonstrated in a few other instances. I dwell primarily on politics because it is what I know best but there will be other examples. When the House of Commons met after the general election of February 1974, it was in the wake of a messy and inconclusive result. What was certain was that the prime minister, Edward Heath, had been rejected, and the central question of his campaign, “Who governs Britain?”, had at least been answered: it was no longer him. Labour had 301 seats, the Conservatives 297 and the Liberal Party 14, and this seemed to feed into a developing narrative that the traditional political parties were exhausted and that the country was perhaps “ungovernable” in the established sense. The Liberal leader, Jeremy Thorpe, summed it up when he welcomed Selwyn Lloyd back to the Speaker’s chair on re-election.

Looking around the House, one realises that we are all minorities now—indeed, some more than others.

But this was not the dying days of an old era, at least, not in that sense. It would be just over five years until Margaret Thatcher was elected at the head of a Conservative government with a healthy and sustainable majority of 44, the party would be in office for 18 years, and it would be succeeded by a Labour government which lasted 13 years. Majorities were by no means dead.

By a similar token, and this is particularly true for people of my age, by the mid-1990s there was a feeling, euphoric on the right and grudging on the left, that Thatcher’s transformation of the UK’s economy and, more profoundly, of the economic debate, had effectively been victorious. In some ways, Tony Blair’s creation of New Labour, and the extent to which it accepted large parts of the Thatcherite settlement, was proof of this. In 2002, Thatcher was asked what her greatest achievement had been. Although she was by that stage in her late seventies and suffering from declining health, she had no hesitation in answering:

Tony Blair and New Labour. We forced our opponents to change their minds.

That seemed then to be true. I reject completely the idea that Blair was merely a crypto-Conservative or that there is no difference between the two major parties, but the scale of Thatcher’s revolution had shifted the Overton Window and there were fundamental elements of politics and economics which the electorate had accepted as no longer part of the debate, as the landscape.

I am still a Thatcherite, but I will say honestly and freely that I underestimated the power of an alternative, stubbornly retrospective world view expressed by Jeremy Corbyn after his surprise election as leader of the Labour Party in 2015. His manifesto for the 2017 general election, For the Many, Not the Few, rekindled arguments and ideologies that I had genuinely thought were dead outside the most isolated academic ivory towers and fringe political groups: widespread renationalisation, a substantially higher rate of income tax for the wealthy, repeal of trades union legislation, abolition of tuition fees and reintroduction of maintenance grants. It was like a late 1970s/early 1980s greatest hits collection. I think much of it is unachievable and a reasonable amount undesirable, but it is equally true that 12,877,918 voters put their support behind it, far more than supported any party at this month’s contest. One can never be sure that an ideological platform is dead.

I have ranged widely but not, I think, without purpose or relevance. To come back to Fukuyama, and Francis Young, my conclusion is this. The End of History was a bold and ambitious assertion that the world had changed in profound ways. It was not wholly borne out by events, but its reasoning was sound enough at the time, yet it suits a certain perspective on politics now to suggest that it was more definitive than it was, and more badly flawed. Fukuyama was not the first man, by a long way, to identify a unique time of crisis in the sense most closely connected to its Greek root, κρίσις, of a decision, judgement or turning point, nor will he be the last. But it was not a Year Zero, or even a Decade Zero, and even a curious schoolboy-turned-undergraduate, a voracious reader increasingly eager to understand the world around him, did not assume that history was over.

The 1990s represented a decade of enormous change, much of it permanent and of colossal influence. Whether you were interested in politics or film or music or art, it was exciting, or at least it was exciting to experience it in your teens. Those of us who look back with heart-rending nostalgia are not wholly wrong to see it as a special time. But it was not the end of history. It was not the beginning of an unprecedented new world. And we didn’t all think that it was. We don’t need to make Francis Fukuyama into a straw man or burden him with arguments he never made, much less should we do that just to tear them down. Every day is new, definitionally, and will see developments that are unprecedented in human history; but every day, too, we will see echoes of the past and ripples of consequence from decisions that have been made before. Distinguishing between them, analysing and understanding them and then navigating them to get to the end of that day is the business of politics, and, for each of us, the business of life itself. Good luck with today, and tomorrow, and tomorrow. Macbeth had a point, but there is more to it than “the way to dusty death”.

Well written! Yeah, his essay was better than most remember it as (but to be fair, what they're remembering is what was in the general zeitgeist of the 1990s, not the specifics of his essay). But at least for the USA, where I'm at, it was still way off, because what he was ultimately saying had “won” was nearly completely de-democratized, centralized technocratic managerialism, and that had been failing from the get-go. It was already performing worse than its predecessor, even according to its own (stupid) key metrics such as real GDP growth, and it performed worse and worse as time went on and it more deeply entrenched itself. We’ve had fifty years of the technocratic dictatorship he was certain was superior to the semi-populist, semi-decentralized, and semi-democratic systems it had replaced, and a prevailing argument can now be made that it is simply an inferior form of government and has been the entire time.